Posted by Paris Hsu – Product Manager, Android Studio

At Google I/O 2024, we shared an exciting live demo during the Developer Keynote in which Gemini transformed a wireframe sketch of an app's UI into Jetpack Compose code, right inside Android Studio. While we're still refining this feature to ensure you get a great experience in Android Studio, it's built on foundational Gemini capabilities that you can experiment with today in Google AI Studio.

We will specifically focus on:

- Converting designs into UI code: Convert a simple image of your app's user interface into working code.

- Smart UI solutions with Gemini: Get suggestions on how to improve or fix your user interface.

- Integrate Gemini prompts into your app: Simplify complex tasks and streamline the user experience with custom prompts.

Note: Google AI Studio offers several generic Gemini models, while Android Studio uses a custom version of Gemini that's optimized specifically for developer tasks. While this means these generic models may not offer the same depth of Android knowledge as Gemini in Android Studio, they do provide a fun and engaging playground to experiment and gain insight into the potential of AI in Android development.

Experiment 1: Converting designs into UI code

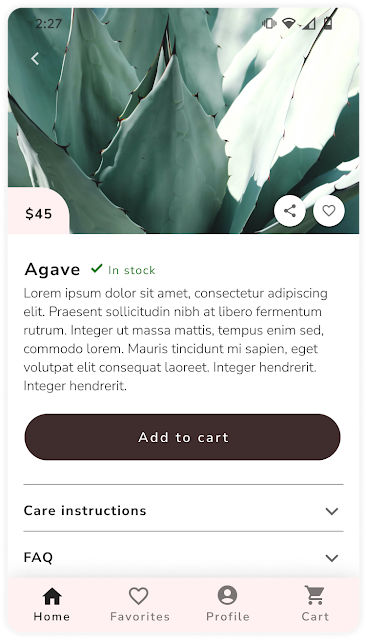

First, to translate designs into Compose UI code: Open the chat prompt section of Google AI Studio, upload an image of your app's UI screen (see the example image), and enter the following prompt:

“Work like an Android app developer. Use Jetpack Compose to build the screen for the provided image, so that the Compose Preview is as close to that image as possible. Also make sure to include imports and use Material3.”

Then click “run” to execute your query and see the generated code. You can copy the generated output directly to a new file in Android Studio.

In this experiment, Gemini extracted details from the image and generated corresponding code elements. For example, the original image of the plant details screen contained a “Care Instructions” section with an expandable icon. Gemini’s generated code contained an expandable card specific to plant care instructions, demonstrating its contextual understanding and code generation capabilities.

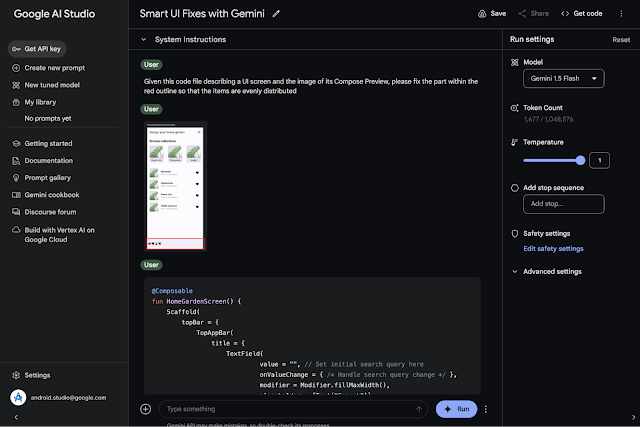

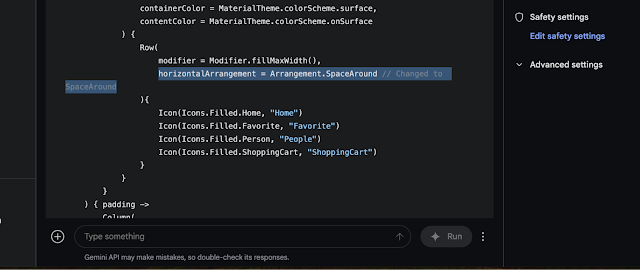
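
To give a rough sense of what an expandable “Care Instructions” card can look like in Compose, here is a minimal hand-written sketch. It is not Gemini's actual output, which will differ from run to run. The composable name `CareInstructionsCard`, the sample instruction text, and the layout values are all assumptions made for illustration.

```kotlin
import androidx.compose.foundation.clickable
import androidx.compose.foundation.layout.Column
import androidx.compose.foundation.layout.Row
import androidx.compose.foundation.layout.fillMaxWidth
import androidx.compose.foundation.layout.padding
import androidx.compose.material.icons.Icons
import androidx.compose.material.icons.filled.KeyboardArrowDown
import androidx.compose.material.icons.filled.KeyboardArrowUp
import androidx.compose.material3.Card
import androidx.compose.material3.Icon
import androidx.compose.material3.MaterialTheme
import androidx.compose.material3.Text
import androidx.compose.runtime.Composable
import androidx.compose.runtime.getValue
import androidx.compose.runtime.mutableStateOf
import androidx.compose.runtime.remember
import androidx.compose.runtime.setValue
import androidx.compose.ui.Alignment
import androidx.compose.ui.Modifier
import androidx.compose.ui.tooling.preview.Preview
import androidx.compose.ui.unit.dp

// Hypothetical composable: a card whose body is shown or hidden when the header is tapped.
@Composable
fun CareInstructionsCard(instructions: String, modifier: Modifier = Modifier) {
    // Remember whether the card is currently expanded across recompositions.
    var expanded by remember { mutableStateOf(false) }

    Card(modifier = modifier.fillMaxWidth()) {
        Column(modifier = Modifier.padding(16.dp)) {
            // Header row: title on the left, expand/collapse icon on the right.
            Row(
                modifier = Modifier
                    .fillMaxWidth()
                    .clickable { expanded = !expanded },
                verticalAlignment = Alignment.CenterVertically
            ) {
                Text(
                    text = "Care Instructions",
                    style = MaterialTheme.typography.titleMedium,
                    modifier = Modifier.weight(1f)
                )
                Icon(
                    imageVector = if (expanded) Icons.Filled.KeyboardArrowUp
                    else Icons.Filled.KeyboardArrowDown,
                    contentDescription = if (expanded) "Collapse" else "Expand"
                )
            }
            // The body is only part of the composition while the card is expanded.
            if (expanded) {
                Text(text = instructions, modifier = Modifier.padding(top = 8.dp))
            }
        }
    }
}

// Preview with placeholder text (the instruction string is made up for this sketch).
@Preview(showBackground = true)
@Composable
fun CareInstructionsCardPreview() {
    MaterialTheme {
        CareInstructionsCard(instructions = "Water once a week. Keep in indirect sunlight.")
    }
}
```

Comparing a small reference like this against the generated code can make it easier to review what Gemini produced before you keep it.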

Experiment 2: Smart UI solutions with Gemini in AI Studio

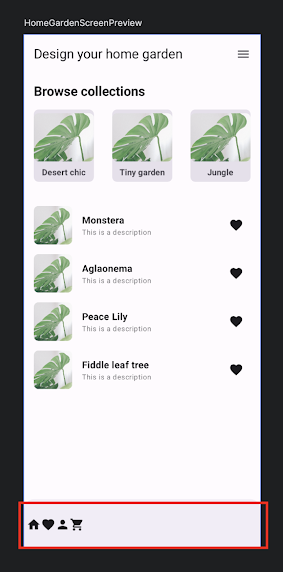

Inspired by “Circle to Search”, another fun experiment you can try is to circle problem areas on a screenshot, provide the relevant Compose code as context, and ask Gemini to suggest appropriate code solutions.

You can explore this concept in Google AI Studio:

1. Upload Compose code and screenshot: Upload the Compose code file for a UI screen and a screenshot of the rendered screen, with a red outline highlighting the issue. In this case, the issue is that the items in the bottom navigation bar should be evenly spaced.
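
For context, here is one common way to get evenly spaced items in a bottom bar with Material3. This is a sketch of the kind of fix you might end up with, not the suggestion Gemini gave in this experiment. The composable name `EvenlySpacedBottomBar` and the three destinations are hypothetical.

```kotlin
import androidx.compose.material.icons.Icons
import androidx.compose.material.icons.filled.Favorite
import androidx.compose.material.icons.filled.Home
import androidx.compose.material.icons.filled.Person
import androidx.compose.material3.Icon
import androidx.compose.material3.NavigationBar
import androidx.compose.material3.NavigationBarItem
import androidx.compose.material3.Text
import androidx.compose.runtime.Composable
import androidx.compose.runtime.getValue
import androidx.compose.runtime.mutableStateOf
import androidx.compose.runtime.remember
import androidx.compose.runtime.setValue
import androidx.compose.ui.Modifier

// Hypothetical bottom bar with three placeholder destinations.
@Composable
fun EvenlySpacedBottomBar(modifier: Modifier = Modifier) {
    // Index of the currently selected destination.
    var selectedIndex by remember { mutableStateOf(0) }

    // Placeholder label/icon pairs, made up for this sketch.
    val destinations = listOf(
        "Home" to Icons.Filled.Home,
        "Favorites" to Icons.Filled.Favorite,
        "Profile" to Icons.Filled.Person
    )

    // NavigationBar lays its items out in a row; each NavigationBarItem
    // takes an equal share of the available width.
    NavigationBar(modifier = modifier) {
        destinations.forEachIndexed { index, (label, icon) ->
            NavigationBarItem(
                selected = index == selectedIndex,
                onClick = { selectedIndex = index },
                icon = { Icon(imageVector = icon, contentDescription = label) },
                label = { Text(label) }
            )
        }
    }
}
```

If the screen uses a plain `Row` instead of `NavigationBar`, setting `horizontalArrangement = Arrangement.SpaceEvenly` on the row is another option to consider.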

Experiment 3: Integrating Gemini Prompts into Your App

Gemini can streamline experimentation and development of custom app features. Imagine you want to build a feature that gives users recipe ideas based on an image of the ingredients they have on hand. In the past, this would have required complex tasks such as hosting an image recognition library, training your own ingredient-to-recipe model, and managing the infrastructure to support it all.

Now, you can achieve this with Gemini using a simple, custom prompt. Let's see how you can add this “Cook Helper” feature to your Android app as an example:

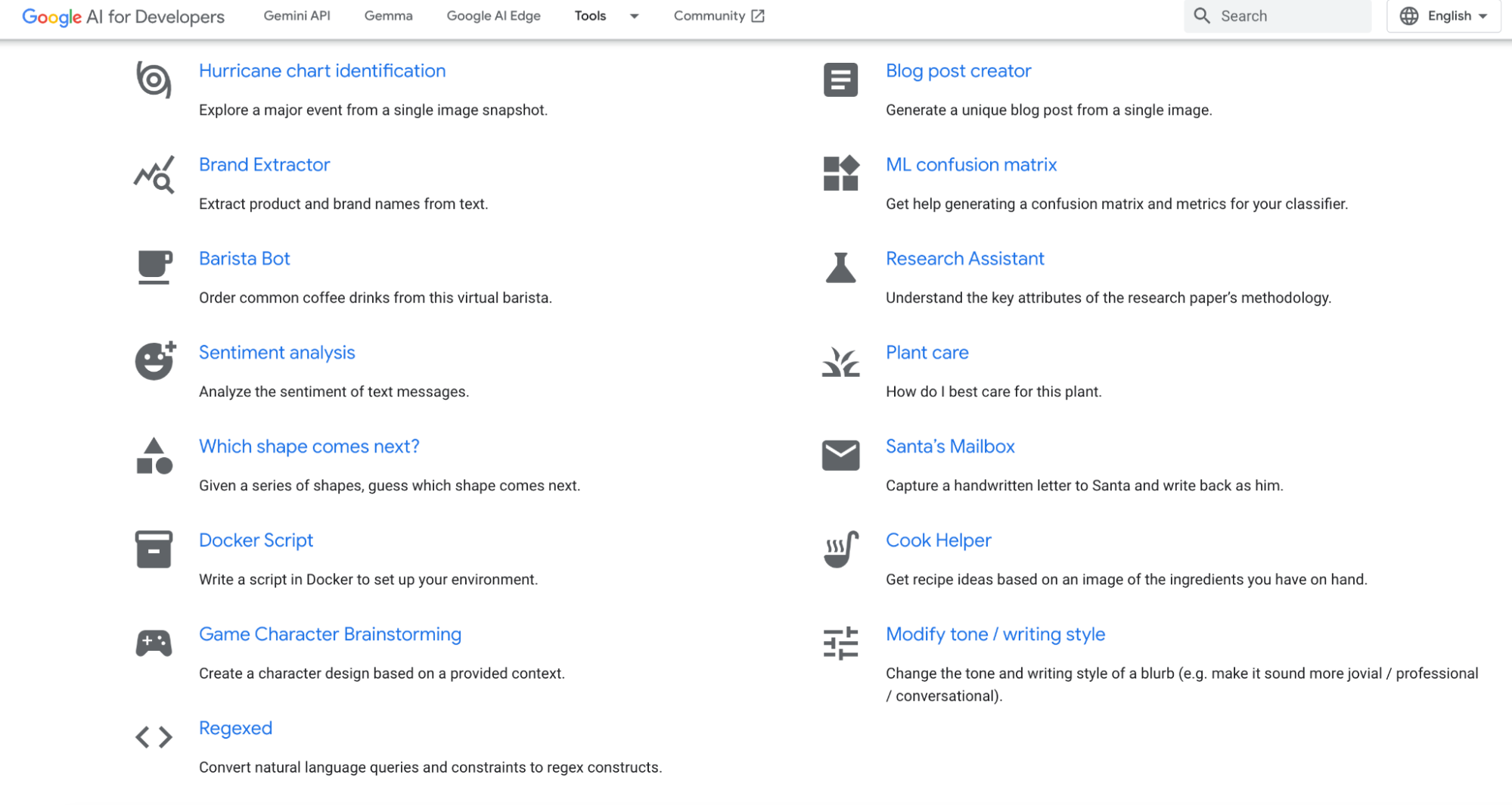

1. Discover the Gemini prompt gallery: Explore sample prompts or create your own. We'll use the “Cook Helper” prompt.

2. Open and experiment in Google AI Studio: Test the prompt with different images, settings, and models to ensure the model responds as expected and the prompt matches your goals.

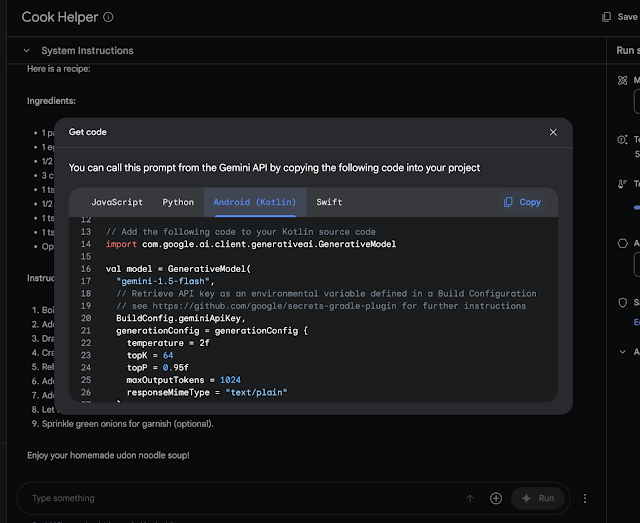

3. Generate the integration code: Once you are satisfied with the performance of the prompt, click “Get Code” and select “Android (Kotlin)”. Copy the generated code snippet.

4. Integrate the Gemini API into Android Studio: Open your Android Studio project. You can use the new Gemini API app template available within Android Studio or follow this tutorial. Paste the copied generated prompt code into your project.

That's it – your app now has a working Cook Helper feature powered by Gemini. We encourage you to experiment with different sample prompts or even create your own custom prompts to enhance your Android app with powerful Gemini features.
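
The code that “Get Code” produces depends on your prompt and settings, so the following is only a rough sketch of what a call through the Google AI client SDK for Android can look like. The function name `suggestRecipes`, the model name, and the prompt wording are assumptions for illustration. They are not the actual “Cook Helper” prompt or the snippet AI Studio generates.

```kotlin
import android.graphics.Bitmap
import com.google.ai.client.generativeai.GenerativeModel
import com.google.ai.client.generativeai.type.content

// Hypothetical helper: sends a photo of ingredients plus a text prompt to Gemini
// and returns the model's text response (null if the response has no text).
suspend fun suggestRecipes(ingredientsPhoto: Bitmap, apiKey: String): String? {
    // The model name is an assumption; use whichever model you tested in AI Studio.
    val model = GenerativeModel(
        modelName = "gemini-1.5-flash",
        apiKey = apiKey
    )

    // Multimodal input: the image followed by an illustrative instruction.
    val prompt = content {
        image(ingredientsPhoto)
        text("Suggest a recipe that uses the ingredients shown in this photo.")
    }

    // generateContent is a suspend function, so call it from a coroutine.
    val response = model.generateContent(prompt)
    return response.text
}
```

You would typically call a function like this from a coroutine scope such as `viewModelScope`, and load the API key from build configuration rather than hard-coding it in source.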

Our approach to bringing AI to Android Studio

While these experiments are promising, it’s important to remember that large language model (LLM) technology is still evolving, and we’re learning as we go. LLMs can be non-deterministic, meaning they can sometimes produce unexpected results. That’s why we take a cautious and thoughtful approach to integrating AI features into Android Studio.

Our philosophy around AI in Android Studio is to empower developers and keep them in the loop. Especially when the AI is making suggestions or writing code, we want developers to be able to carefully review the code before putting it into production. That's why, for example, the new Code Suggestions feature in Canary automatically opens a diff view so developers can see how Gemini proposes to modify their code, rather than blindly applying the changes immediately.

We want to ensure that these features, such as Gemini in Android Studio, are thoroughly tested, reliable, and truly useful to developers before we bring them into the IDE.

What now?

We invite you to try these experiments and share your favorite prompts and examples with us using the #AndroidGeminiEra tag on X and LinkedIn as we continue to explore this exciting frontier together. Also follow Android Developers on LinkedIn, Medium, YouTube, or X for more updates! AI has the potential to revolutionize the way we build Android apps, and we can’t wait to see what we can create together.